Autonomous AI Web Security

Evidence-first autonomous web security assessments

Layer8 combines autonomous UI exploration with dynamic plan generation to automate web security testing. Instead of spamming noisy alerts, we deliver reproducible, evidence-backed findings so engineers can jump straight into remediation.

Focus

Evidence-first automation that prioritizes clarity, proof, and fix-ready output over raw scan volume.

Perfect for

Teams shipping web apps weekly or daily that need trustworthy security signals without slowing releases.

3-minute demo: end-to-end flow

Watch the agents explore, generate plans, and validate a target in one continuous session.

What this demo highlights

- Agents explore the UI end-to-end just like a human operator

- Observed context instantly feeds into a living test plan

Walkthrough

An evidence-first workflow broken into six simple steps.

Step 1 — Target & Scope

Define the target URL, allowed domains/paths, and guardrails (rate, concurrency, timeout). The engine never leaves the authorized scope.
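As a rough illustration of what a scope definition with guardrails could look like, here is a minimal Python sketch. The `Scope` class, its field names, and the default values are assumptions made for this example and do not describe Layer8's actual configuration format.

```python
from dataclasses import dataclass
from urllib.parse import urlsplit


@dataclass(frozen=True)
class Scope:
    """Hypothetical scope definition; names and defaults are illustrative only."""
    allowed_hosts: frozenset
    allowed_path_prefixes: tuple = ("/",)
    max_requests_per_second: float = 2.0  # rate guardrail
    max_concurrency: int = 2              # concurrency guardrail
    timeout_seconds: float = 10.0         # per-request timeout guardrail

    def permits(self, url: str) -> bool:
        """Default-deny: accept only http(s) URLs on an allowlisted host and path."""
        parts = urlsplit(url)
        if parts.scheme not in ("http", "https"):
            return False
        host = (parts.hostname or "").lower()
        if host not in self.allowed_hosts:
            return False
        path = parts.path or "/"
        return any(_is_under(path, prefix) for prefix in self.allowed_path_prefixes)


def _is_under(path: str, prefix: str) -> bool:
    """Segment-aware prefix match, so '/app' does not match '/application'."""
    base = prefix.rstrip("/")
    return path == base or path.startswith(base + "/")


scope = Scope(
    allowed_hosts=frozenset({"staging.example.com"}),
    allowed_path_prefixes=("/app",),
)
```

A production check would also need to normalize paths (for example `..` segments) and re-check every redirect target; this sketch leaves both out.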

Step 2 — Autonomous Exploration

Agents drive the web app like a user while collecting context.

- Screen transitions and UI states

- Input surfaces (forms, queries, parameters)

- API calls triggered while interacting

- Authorization boundaries before/after login

Step 3 — Plan Generation

The exploration map becomes a prioritized test plan that adapts to the application's responses rather than following a static checklist.
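One way to picture a plan that adapts to responses is a priority queue whose scores are adjusted as observations come in. The sketch below is an assumption for illustration only: `AdaptivePlan`, `PlannedTest`, and the scoring scheme are hypothetical and are not taken from Layer8's engine.

```python
import heapq
from dataclasses import dataclass, field


@dataclass(order=True)
class PlannedTest:
    priority: float                      # lower value runs sooner (heapq is a min-heap)
    name: str = field(compare=False)
    surface: str = field(compare=False)  # e.g. a route or API endpoint


class AdaptivePlan:
    """Hypothetical living test plan: pending tests are re-ranked on new observations."""

    def __init__(self):
        self._heap = []

    def add(self, name: str, surface: str, score: float) -> None:
        # Store the negated score so that higher scores pop first.
        heapq.heappush(self._heap, PlannedTest(-score, name, surface))

    def observe(self, surface: str, boost: float) -> None:
        """Raise the score of pending tests on a surface that responded interestingly."""
        self._heap = [
            PlannedTest(t.priority - boost if t.surface == surface else t.priority,
                        t.name, t.surface)
            for t in self._heap
        ]
        heapq.heapify(self._heap)

    def next(self):
        """Return the highest-scoring pending test, or None when the plan is empty."""
        return heapq.heappop(self._heap) if self._heap else None


plan = AdaptivePlan()
plan.add("authorization-check", "/api/orders", 0.5)
plan.add("malformed-input", "/search", 0.7)
plan.observe("/api/orders", 0.4)  # e.g. an unexpected response was seen on this surface
```

After the observation, the test on `/api/orders` outranks the one on `/search`, which is the kind of re-ordering a static checklist cannot do.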

Step 4 — Controlled Testing

High-signal surfaces receive carefully scoped tests, such as:

- Access control and authorization seams

- Injection or malformed input risks

- Configuration mistakes, debug remnants, data leaks

- Client-side data handling hazards

Step 5 — Verification (Evidence-first)

Candidates are never reported blindly. Each one is validated with logs, HTTP traces, or replayed scenarios before it is reported, which keeps false positives to a minimum.
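The replay idea can be sketched as follows: a candidate is re-run several times and is confirmed only if proof is observed consistently. The `verify` function, the `Verdict` type, and the "three out of three" threshold are assumptions for this example, not Layer8's actual verification logic.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class Verdict:
    confirmed: bool
    evidence: List[str]  # e.g. HTTP trace excerpts captured on each successful replay


def verify(replay: Callable[[], Optional[str]],
           attempts: int = 3,
           required: int = 3) -> Verdict:
    """Replay a candidate `attempts` times; confirm only if proof appears `required` times.

    `replay` is a hypothetical callable that re-runs the scenario and returns a
    piece of evidence (a string here) when the issue reproduces, or None otherwise.
    """
    evidence = []
    for _ in range(attempts):
        proof = replay()
        if proof is not None:
            evidence.append(proof)
    return Verdict(confirmed=len(evidence) >= required, evidence=evidence)


# A scenario that reproduces every time is confirmed.
stable = verify(lambda: "GET /api/orders/42 -> 200 as another user")

# A scenario that reproduces only intermittently stays unconfirmed.
outcomes = iter(["trace-1", None, "trace-3"])
flaky = verify(lambda: next(outcomes))
```

Keeping the evidence list even for unconfirmed candidates makes it possible to review why something was not reported.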

Step 6 — Deliverables

Findings ship in multiple formats so teams can act immediately.

- Human-readable report: impact, evidence, reproduction, guidance

- Evidence bundle: log/traffic snippets for audits or retests

- Structured output: ticketing / CI integration (optional)
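To show how structured output might plug into a CI pipeline, here is a small Python sketch that turns a findings report into a pass/fail exit code. The JSON shape (`findings`, `severity`, `verified`) and the severity scale are hypothetical; the real output schema may differ.

```python
import json

# Assumed severity scale, ordered from least to most severe.
SEVERITY_ORDER = ["info", "low", "medium", "high", "critical"]


def ci_exit_code(report_json: str, fail_at: str = "high") -> int:
    """Return 1 if any verified finding is at or above `fail_at`, else 0."""
    findings = json.loads(report_json)["findings"]
    threshold = SEVERITY_ORDER.index(fail_at)
    blocking = [
        f for f in findings
        if f["verified"] and SEVERITY_ORDER.index(f["severity"]) >= threshold
    ]
    return 1 if blocking else 0


# Illustrative report; field names and values are made up for this example.
SAMPLE_REPORT = json.dumps({
    "findings": [
        {"id": "F-1", "title": "Missing authorization check",
         "severity": "high", "verified": True},
        {"id": "F-2", "title": "Verbose error page",
         "severity": "low", "verified": True},
        {"id": "F-3", "title": "Unconfirmed candidate",
         "severity": "critical", "verified": False},
    ]
})
```

In a pipeline, a wrapper script could call `sys.exit(ci_exit_code(report))` so that only verified findings above the chosen threshold block a release.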

For architecture, runtime, and guardrail specifics, visit /technology.

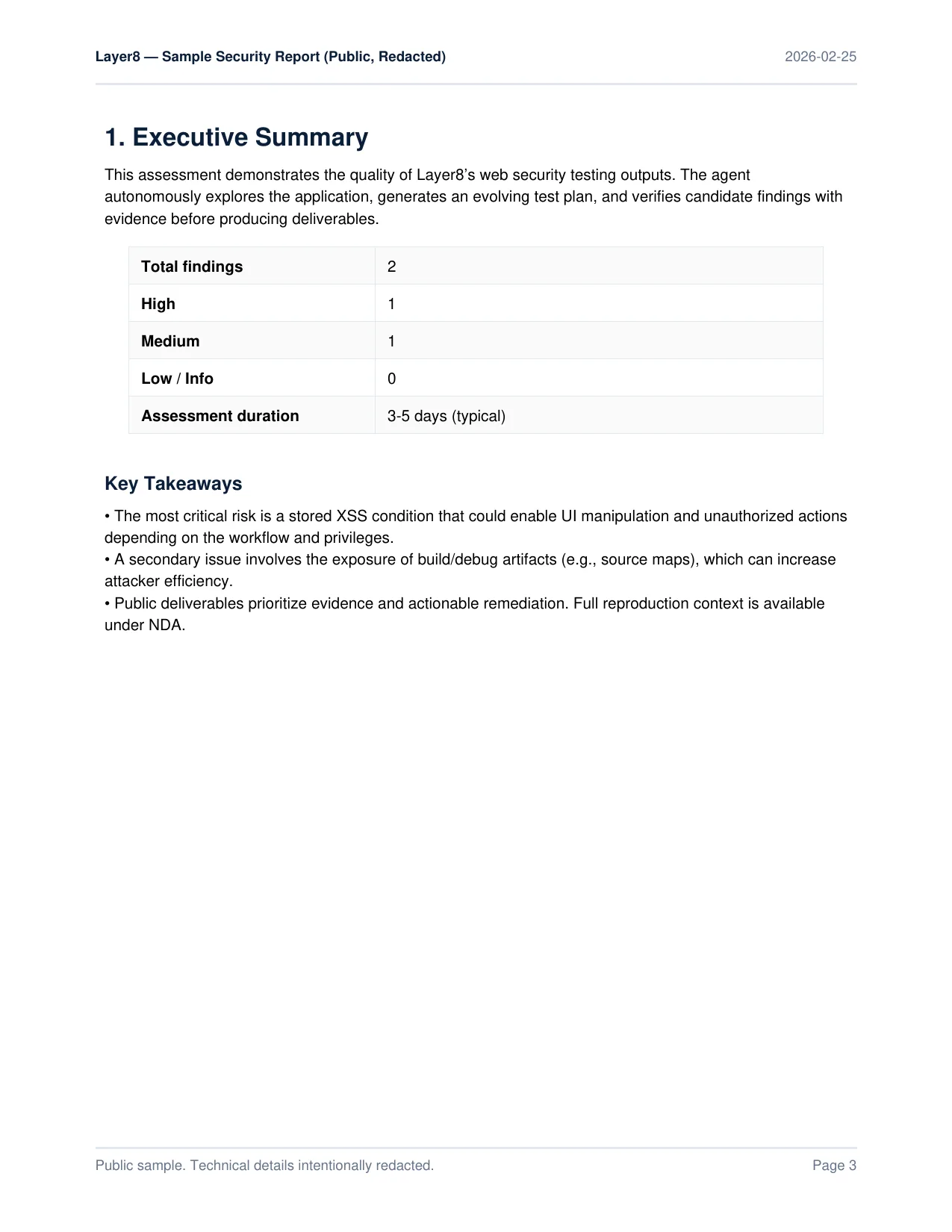

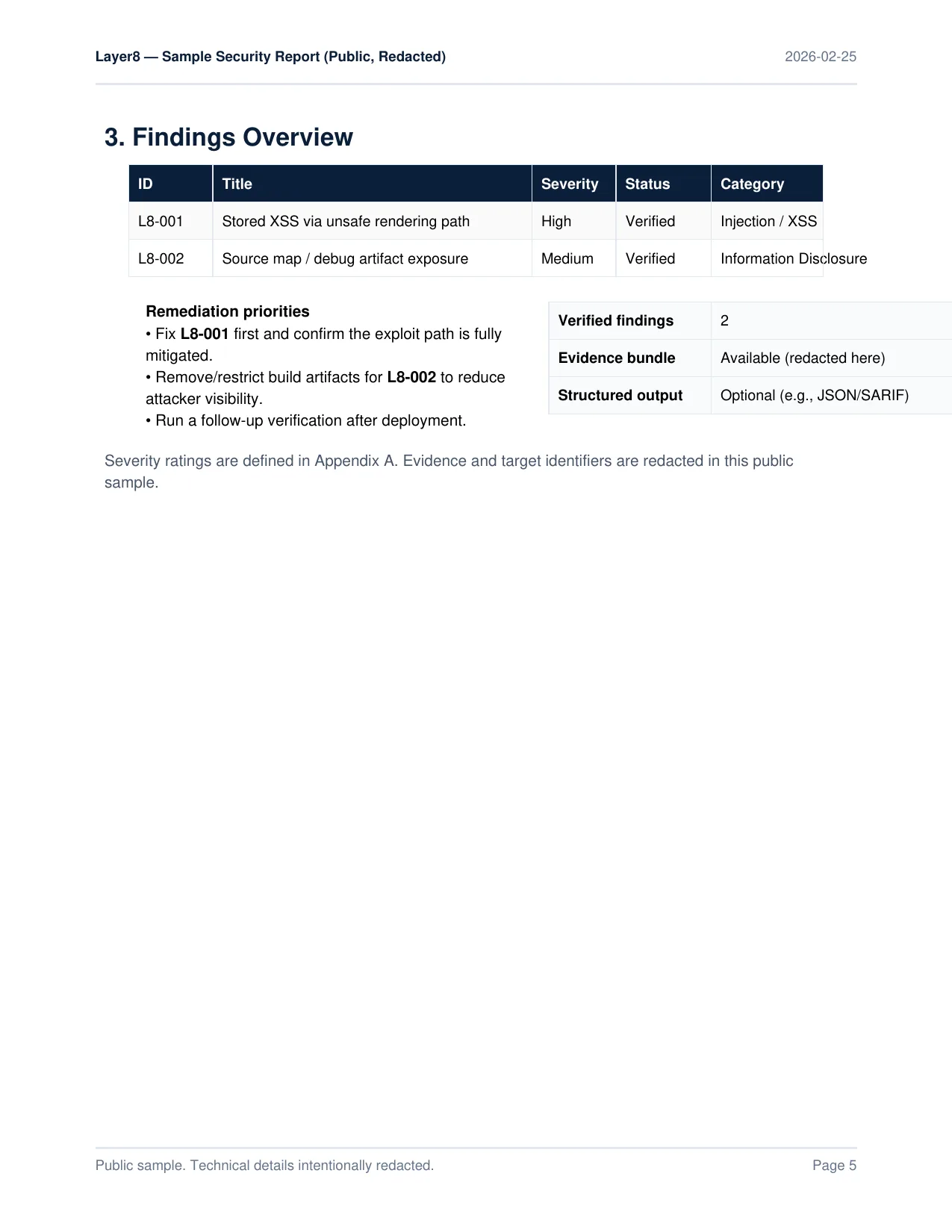

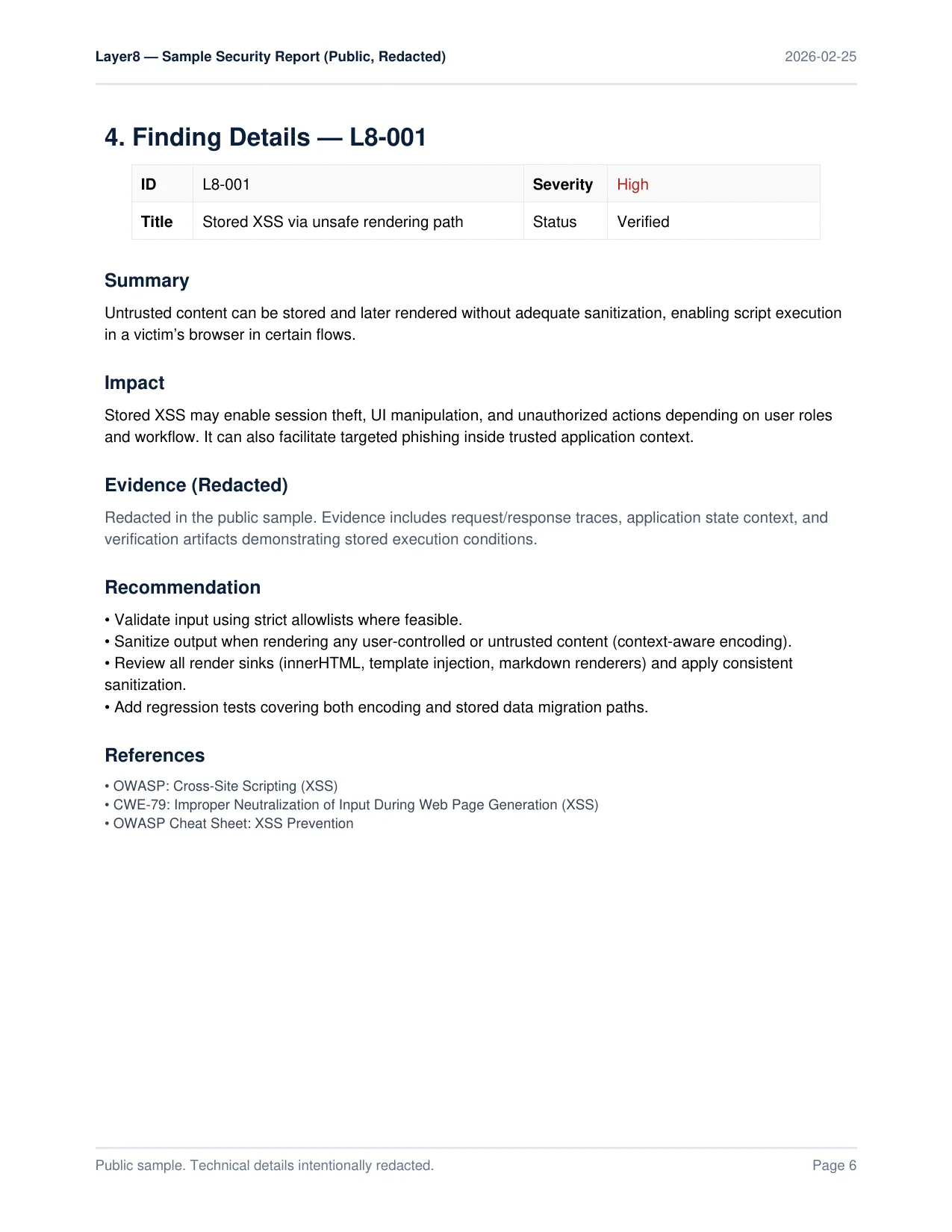

Sample Report (English, Redacted)

A redacted, public-safe sample that shows the quality of our deliverables. Full reports include complete evidence and reproduction details.

Safety & controls

A default-deny posture keeps every run inside the authorized scope.

- Scope enforcement: requests cannot leave the allowlisted domains/paths

- Load controls: safe defaults for rate, concurrency, and timeout

- Auditability: detailed execution logs and evidence for review
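Load controls such as a request rate cap are commonly implemented with a token bucket. The sketch below swaps in that well-known technique as an illustration; the `TokenBucket` class and its parameters are assumptions and do not describe how Layer8 enforces its limits.

```python
import time


class TokenBucket:
    """Simple token-bucket limiter: `rate` tokens per second, up to `capacity` burst."""

    def __init__(self, rate: float, capacity: int, clock=time.monotonic):
        self.rate = rate
        self.capacity = capacity
        self._clock = clock              # injectable clock, useful for testing
        self._tokens = float(capacity)   # start with a full bucket
        self._last = clock()

    def try_acquire(self) -> bool:
        """Consume one token if available; return False when the request must wait."""
        now = self._clock()
        # Refill in proportion to elapsed time, never exceeding capacity.
        self._tokens = min(self.capacity, self._tokens + (now - self._last) * self.rate)
        self._last = now
        if self._tokens >= 1.0:
            self._tokens -= 1.0
            return True
        return False


# Usage with a fake clock so the behavior is deterministic.
fake_now = [0.0]
bucket = TokenBucket(rate=2.0, capacity=2, clock=lambda: fake_now[0])
burst = [bucket.try_acquire() for _ in range(3)]  # third request exceeds the burst
fake_now[0] = 0.5                                 # half a second later: one token refilled
after_wait = bucket.try_acquire()
```

A caller that receives `False` would delay or drop the request, which keeps traffic to the target within the configured rate.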

Delivery models (API-first)

- Single engagement (pentest-style)

- Recurring assessments

- CI/CD integration with structured output

FAQ

Do you replace a manual pentest?

We automate the discovery and verification loops so human testers can focus on judgment and on chaining findings into attack paths. Most teams run us alongside expert testers.

Do you guarantee full coverage?

No. The goal is high-signal, reproducible findings, not a claim of complete coverage.

Can you handle authenticated flows?

Yes. We support multiple auth schemes and validate requirements during the PoC stage.

Request a PoC

Share the following so we can scope a pilot quickly.

- Target application (auth/no-auth, environment details)

- Engagement type: single, recurring, or CI-linked

- Desired PoC timeline (e.g., 2-week pilot)

Email contact@layer8.jp or use the form.